OpenAI & Cloud: 2 Use Cases We Built & What We Learned

In the world of generative AI, OpenAI has emerged with powerful tools that are ready to integrate with enterprise cloud setups.

Recently, we’ve been working on two use cases involving generative AI.

These use cases revolve around leveraging ChatGPT for safe executive use, and enhancing employee experience by utilising large language models for internal data exploration.

In this case study, we’ll dive into these use cases and share the insights we gained throughout the projects.

Use Case 1: SafeGPT

Empowering executives with ChatGPT in a controlled environment

For our first use case, we were presented with the need for executives to use ChatGPT, but with an added layer of control and security. They required a controlled environment that ensures their data remains protected while enabling seamless communication with ChatGPT.

The solution was to develop a cloud-based platform, which would offer the security, flexibility and scalability needed to meet the requirements.

By partnering with Microsoft's Azure OpenAI Service, we gained access to ChatGPT's capabilities while also leveraging Azure Cognitive Search and Azure's governance features.

This integration allowed us to put the security and authentication structure in place. It gave employees and executives a place to harness the power of ChatGPT within their company environment, instead of using the public OpenAI.com version.

Use Case 2: Semantic search

Enhancing employee experience through semantic search on internal data

The second use case involved leveraging ChatGPT as a large language model to enhance user experience within a company’s private intranet.

Employees needed a natural language interface to navigate internal documents, retrieve information, and receive contextualised answers to their queries. Semantic search became the key to accomplishing this goal.

By connecting ChatGPT to the customer's internal data sources, such as SharePoint, we enabled employees to perform semantic searches using natural language queries. This approach eliminated the need for cryptic search terms and allowed users to interact with the system in a more intuitive manner.

Employees could ask questions related to company policies - such as annual leave requests, expense queries or training documents - and ChatGPT would retrieve relevant answers from the internal documents.
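The retrieval step behind this kind of semantic search can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation we used. The `embed` function is a hypothetical toy stand-in that counts words. A real system would call an embedding model (for example one deployed through Azure OpenAI) and store the vectors in a search index.

What the sketch does show is the ranking. The query and the documents are turned into vectors and compared by cosine similarity, and the best matches are placed in the prompt as context for ChatGPT. The document IDs and texts are made up.

```python
import math
import re
from collections import Counter

# Hypothetical internal documents, for illustration only.
DOCS = {
    "leave-policy": "Annual leave requests must be submitted to your line manager at least two weeks in advance.",
    "expenses": "Expense claims for travel and meals are submitted through the finance portal with receipts.",
    "training": "Mandatory security training documents are available on the learning intranet page.",
}


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag of lower-cased words."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(query: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the IDs of the top_k documents most similar to the query."""
    q = embed(query)
    scored = [(cosine(q, embed(text)), doc_id) for doc_id, text in documents.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Drop documents with no similarity at all rather than padding the results.
    return [doc_id for score, doc_id in scored[:top_k] if score > 0]


def build_prompt(question: str, documents: dict[str, str], top_k: int = 2) -> str:
    """Assemble a prompt asking the model to answer only from the retrieved passages."""
    hits = search(question, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in hits)
    return (
        "Answer using only the internal documents below. "
        "If they do not contain the answer, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


print(search("How do I submit an annual leave request?", DOCS))  # → ['leave-policy']
```

The toy `embed` only matches shared words. It cannot tell that "holiday" and "annual leave" mean the same thing. Closing that gap is exactly what a real embedding model adds.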

Lessons Learned:

Data classification and preparation.

Before deploying generative AI models, thorough data classification and preparation are necessary. This process involves structuring and organising data to optimise performance and prevent potential issues arising from inconsistent or irrelevant information.
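For a retrieval setup like the semantic search use case, one common preparation step has three parts. First, clean the documents. Second, drop any that are empty or duplicated. Third, split the rest into overlapping chunks that carry their source ID.

The Python sketch below assumes plain-text input and uses word counts as a rough proxy for model tokens. The function names and default sizes are illustrative choices, not recommendations.

```python
import re


def normalise(text: str) -> str:
    """Collapse whitespace so formatting differences don't hide duplicates."""
    return re.sub(r"\s+", " ", text).strip()


def chunk_words(text: str, max_words: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of max_words words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be non-negative and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # this window already reaches the end of the text
    return chunks


def prepare(documents: dict[str, str], max_words: int = 50, overlap: int = 10) -> list[dict]:
    """Normalise, de-duplicate and chunk documents, keeping the source ID on every chunk."""
    seen = set()
    records = []
    for doc_id, raw in documents.items():
        text = normalise(raw)
        key = text.lower()
        if not text or key in seen:
            continue  # skip empty documents and verbatim duplicates
        seen.add(key)
        for i, piece in enumerate(chunk_words(text, max_words, overlap)):
            records.append({"doc_id": doc_id, "chunk": i, "text": piece})
    return records
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk. Keeping `doc_id` on every chunk lets an answer point back to its source document.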

Role-based access control.

To maintain data privacy and prevent unauthorised access, role-based access control mechanisms should be implemented. This ensures that employees only have access to the information they are authorised to view, preventing potential data breaches or leaks.
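Below is a minimal Python sketch of role-based filtering. It assumes each indexed document carries a hypothetical `allowed_roles` set, for example derived from the source system's permissions. It also assumes each user's roles come from the identity provider.

The design choice that matters is where the check happens. Filtering before any text reaches the model means ChatGPT can never quote a document the user could not open directly. The class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # hypothetical: roles permitted to read this document


@dataclass(frozen=True)
class User:
    name: str
    roles: frozenset  # roles assigned by the identity provider (assumed)


def can_view(user: User, doc: Document) -> bool:
    """A user may view a document if they hold at least one of its allowed roles."""
    return not user.roles.isdisjoint(doc.allowed_roles)


def authorised_documents(user: User, documents: list[Document]) -> list[Document]:
    """Filter the candidate documents before any of them are sent to the model."""
    return [doc for doc in documents if can_view(user, doc)]


DOCS = [
    Document("hr-casework", "Details of open HR cases.", frozenset({"hr"})),
    Document("leave-policy", "How to request annual leave.", frozenset({"all-staff", "hr"})),
    Document("budget-forecast", "Departmental budget figures.", frozenset({"finance"})),
]

alice = User("alice", frozenset({"all-staff"}))
print([d.doc_id for d in authorised_documents(alice, DOCS)])  # → ['leave-policy']
```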

Content classification.

Classifying large amounts of data based on metadata or content can be challenging. Many organisations struggle to understand and organise their file data. They need help with effective content classification techniques, or with classification tools that work with their cloud platform.
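Where no dedicated classification tool is in place yet, a simple starting point is to combine metadata with content rules. The Python sketch below assumes a hypothetical `sensitivity` metadata field and made-up keyword rules. The logic works in three steps:

1. An explicit label from metadata wins.
2. Otherwise, the first matching content rule applies.
3. Anything else is flagged as unclassified for review.

```python
import re

# Hypothetical rules, checked in order from most to least sensitive.
CONTENT_RULES = [
    ("confidential", re.compile(r"\b(salary|payroll|bank details)\b", re.IGNORECASE)),
    ("internal", re.compile(r"\b(policy|procedure|internal)\b", re.IGNORECASE)),
]


def classify(metadata: dict, text: str) -> str:
    """Return a sensitivity label for a document from its metadata or content."""
    # An explicit label set by the document owner takes precedence.
    label = metadata.get("sensitivity")
    if label:
        return label
    # Otherwise fall back to the first content rule that matches.
    for name, pattern in CONTENT_RULES:
        if pattern.search(text):
            return name
    # Nothing matched: flag for manual review.
    return "unclassified"


print(classify({}, "Payroll runs on the 25th of each month."))  # → confidential
```

Keyword rules are crude and will miss or mislabel content. Treat this as a way to triage files, not a replacement for a proper classification tool.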

OpenAI Integration Services

We’re here to help with the integration and utilisation of AI on your cloud platforms, so you can power and scale AI applications effectively.

So where exactly did these companies need help with AI integrations?

Creating a controlled environment for using ChatGPT

Ensuring that sensitive data (for example personal data or company IP) remains secure, and that customers have control over how their data is used and stored. We can help by building the infrastructure and engineering required for a ring-fenced, safe and regulated environment where selected users can leverage ChatGPT or similar AI tools.

Utilising large language models for better user experiences

Leveraging large language models like ChatGPT to improve user experiences within their organisations. We can integrate solutions that apply semantic search to data within security and privacy boundaries, so users can find relevant information quickly and easily with natural language queries. This can enhance productivity and provide accurate answers to questions about company policies, procedures and other internal information.

Addressing data storage and access control

Carefully managing data storage and access control ensures that data remains within the company’s subscription and is not shared or used without its consent. We can help implement role-based access controls and safeguards to comply with regulatory requirements and protect sensitive information.

Cloud engineering and integration

Engineering expertise is vital when building the necessary infrastructure and integrating AI and cloud services. This includes setting up data landing zones, connecting with APIs, and integrating with various cloud services. We’re here to simplify the process of leveraging AI by providing streamlined, efficient cloud-native solutions and best practices.

As the technology continues to evolve, generative AI in the cloud holds immense potential for organisations across many industries. By addressing the challenges and lessons learned from these use cases (and others), we’re paving the way for more efficient and effective use of generative AI models, unlocking new possibilities for businesses.

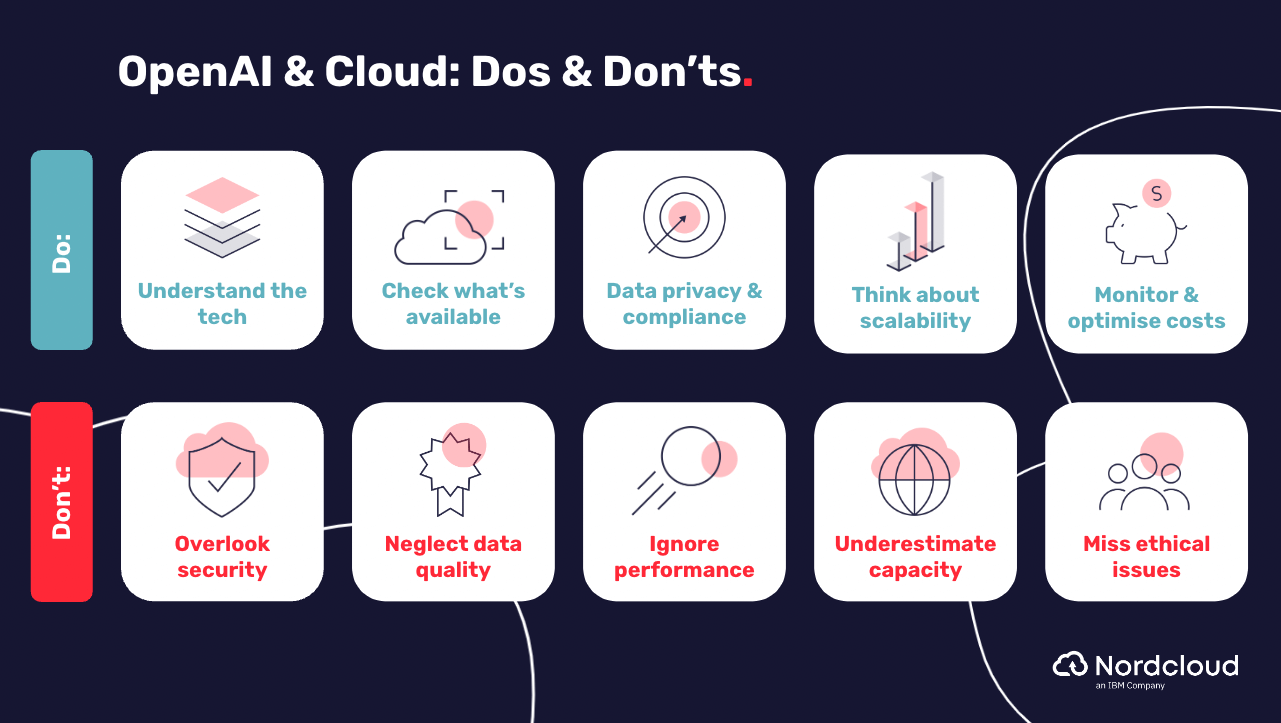

Are you considering using OpenAI in your cloud setup? We’ve got you covered with our OpenAI do's and don'ts: check out the graphic above for a summary, or see our full breakdown.

Are you ready to realise the potential of OpenAI + cloud for your business?

We’re here to help with the integration and utilisation of OpenAI on your cloud platforms, allowing your teams and developers to make the most of your tools, services, and infrastructure to power and scale AI applications effectively.

See our OpenAI integration services and get in touch; we’d love to help.
