Navigating Your Application Modernisation Journey. A Case in Point: Platform and Software Modernisation.

In the fast-evolving landscape of technology, many organisations still rely on legacy projects that are built on old infrastructure and use technologies that are not current. The need to modernise applications has become a pressing priority.

Cloud modernisation is enabling cutting-edge innovations to help companies stay ahead of the curve. But organisations can’t make the most of these rapidly maturing technologies unless they establish the right IT foundation.

As enterprises evolve to navigate disruption and accelerate innovation, their technology architecture needs to mature as well. In this blog we’ll present a typical application modernisation journey for enterprise needs.

Cloud Application and Database Modernisation scenario explained

To illustrate the practicality of modernisation, let's delve into the real-world experience of modernising a complex set of applications for an organisation that provides and operates critical services. The applications' as-is technology stack is an older version of Java with DB2.

The application is hosted on an EC2 instance in the AWS cloud. The modernisation covers upgrading the application software stack to the latest Java version, migrating DB2 to AWS RDS PostgreSQL, replacing the licensed application server with Tomcat, containerising the applications, and moving from manual builds to CI/CD.

Collaboration Fuels Cloud Modernisation

Cloud modernisation initiatives involve coordinated teamwork, engaging various project stakeholders such as Business/Product, IT/Infrastructure, DBA, Architects/Developers, testing and support teams. The goal is to establish a project plan, an essential blueprint that includes the creation of High-Level Design (HLD) and Low-Level Design (LLD) documents.

These documents encompass every facet of design details, from existing infrastructure to future infrastructure specifications, as well as the existing and modernised platform details and application technical stack specifics.

Some of the key aspects of implementing modernisation include:

Upgrading Cloud Infrastructure

Modernisation begins with optimising infrastructure utilisation, particularly in terms of compute and storage. This could involve transitioning from an EC2 server to an ECS cluster with Fargate compute, running applications as Fargate tasks within an ECS cluster.

Leverage Cloud Native Services

Enhancing email services by replacing Outlook SMTP with the AWS native SES (Simple Email Service) SMTP, and using cloud native managed services such as AWS RDS for databases and cloud storage.

Reduction in Licensing Costs

Modernisation often includes upgrading servers and other software components to reduce licensing expenses. An example is migrating from a licensed application server to an open-source one such as Tomcat, or TomEE for EJB applications.

OS and Software Upgrades

Ensuring the project structure is compatible with Maven, addressing vulnerabilities in older libraries, and upgrading to the latest software versions such as Java, supported container images, and the latest Red Hat OS.

Version Control and Streamlined Deployment

Transitioning from legacy source control (such as CVS) to Git and harnessing the latest automation features in the build and deployment processes. Implementing a deployment pipeline for automated deployment of all applications and their sub-modules using GitHub Actions.

The following section delves into the implementation factors taken into account for the modernisation project.

Cloud Infrastructure: Compute and Storage

Consider an existing web application that is hosted on cloud compute (such as one or more EC2 instances) and uses a licensed application server. Your modernisation objective is to move away from the licensed model to an open-source environment such as Tomcat.

While doing this, the need to containerise the application should be considered as well. In such transitions, we observe that optimising both compute and storage resources is essential from an operational perspective.

How do we start?

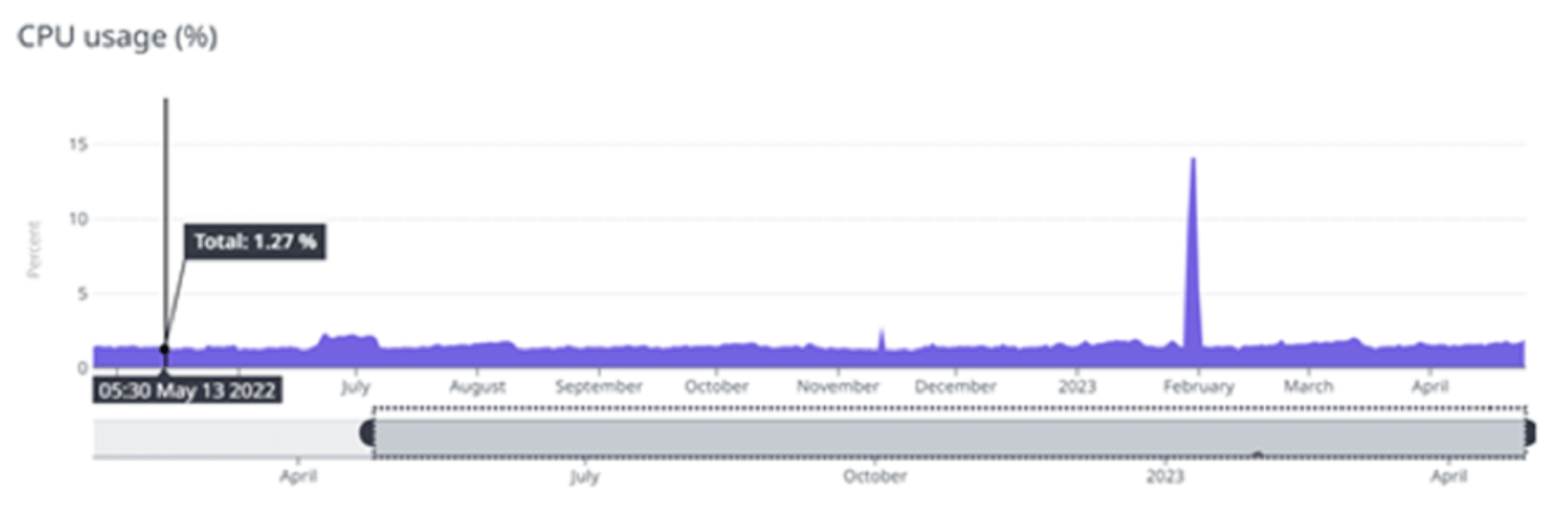

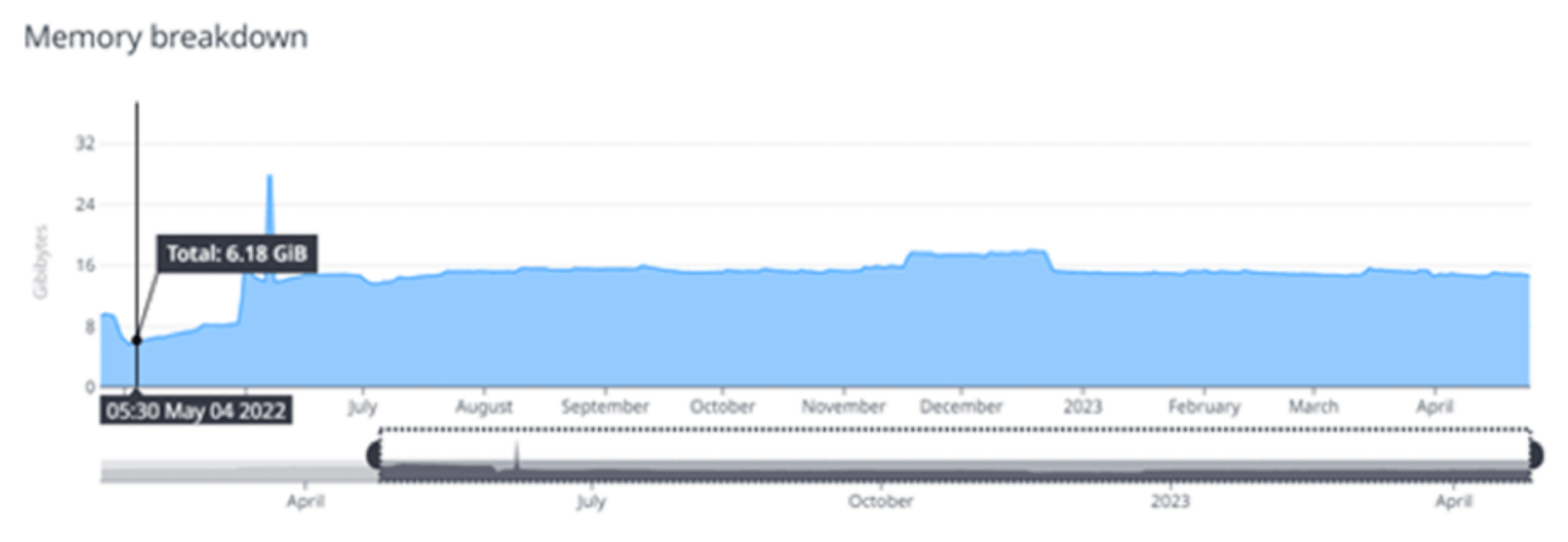

A typical approach would be to examine the utilisation metrics of the existing infrastructure resources (such as the EC2 instance(s) that host the application server). The utilisation metrics can be obtained from cloud native monitoring reports or third-party tools such as Datadog or Prometheus.

If resources are consistently underutilised (e.g. peak CPU and memory utilisation stays below 40% over a period of 9-12 months), then it’s recommended to downsize the infrastructure. This helps eliminate the cost of idle compute resources.

On the other hand, if resources frequently exceed the threshold (e.g. peak CPU and memory utilisation is above 80% most of the time over a period of 9-12 months), then scaling up CPU and RAM is recommended.
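The thresholds above can be expressed as a small decision rule. The following is a minimal sketch in Java, assuming the peak CPU and memory utilisation (as percentages) have already been exported from your monitoring tool. The class name `RightSizing`, the `Recommendation` enum, and the choice to recommend scaling up when either metric exceeds the upper threshold are illustrative assumptions, not part of any AWS API.

```java
/** Illustrative right-sizing rule. All names here are assumptions made for this sketch. */
final class RightSizing {

    enum Recommendation { DOWNSIZE, SCALE_UP, KEEP }

    // Thresholds from the examples in the text, in percent utilisation.
    static final double LOW_THRESHOLD = 40.0;
    static final double HIGH_THRESHOLD = 80.0;

    /**
     * Downsize only when both peaks stay under the low threshold.
     * Scale up as soon as one resource exceeds the high threshold
     * (assumption: a single saturated resource is already a bottleneck).
     */
    static Recommendation recommend(double peakCpuPercent, double peakMemoryPercent) {
        if (peakCpuPercent < LOW_THRESHOLD && peakMemoryPercent < LOW_THRESHOLD) {
            return Recommendation.DOWNSIZE;
        }
        if (peakCpuPercent > HIGH_THRESHOLD || peakMemoryPercent > HIGH_THRESHOLD) {
            return Recommendation.SCALE_UP;
        }
        return Recommendation.KEEP;
    }

    public static void main(String[] args) {
        // Hypothetical peak values observed over the review period.
        System.out.println(recommend(8.7, 35.0));   // DOWNSIZE
        System.out.println(recommend(92.0, 60.0));  // SCALE_UP
        System.out.println(recommend(55.0, 60.0));  // KEEP
    }
}
```

In practice the inputs would come from a 9-12 month observation window, and the rule would be one input to the sizing discussion rather than an automatic trigger.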

One of the approaches in addressing a substantial gap between resource allocation and actual utilisation is to containerise the web application (use an ECS Fargate setup). Additionally, for the storage of logs, static images, and other pertinent files, we can use EFS as a storage solution that seamlessly integrates into ECS cluster(s). This approach promises to enhance resource efficiency and reduce operational costs.

Illustration 1: CPU Utilisation Metric

Illustration 2: Memory Utilisation Metric

The monitoring reports give the following breakdown of CPU and memory utilisation:

| EC2 | Min | Max | Avg |
| --- | --- | --- | --- |
| CPU Usage | 0.32% | 8.7% | 0.65% |
| Memory Usage | 18.3 GiB | 46.1 GiB | 20.2 GiB |

Modernised infra compute details:

Based on the gathered metrics and the application modernisation process, it’s recommended to reallocate compute and storage resources. It's evident from the above example that there can be a significant reduction in the amount of compute capacity required to run a similar suite of applications effectively.

As part of this transformation, you can also introduce a Multi-AZ DB cluster (such as AWS RDS for PostgreSQL), effectively replacing a setup of replicated r5b.2xlarge instances, each with a substantial 2.5 TB storage capacity.

To further streamline operations, you can run the application as an ECS task on Fargate, configured with 4 vCPUs and 16 GB of memory. For data storage, we adopted EFS (Standard or One Zone), which serves as our repository for pictures and static file content.

The modernised database was maintained in a Multi-AZ DB cluster to ensure high availability. The storage type is Provisioned IOPS SSD, which supports write-intensive workloads.

However, lower environments such as DEV and Test can be kept in a single AZ to optimise cloud resources, while Multi-AZ availability can be adopted for the Preprod and Prod environments.
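This environment policy is simple enough to encode so that provisioning scripts and reviews use the same rule. The sketch below is a hedged illustration in Java: the class `DatabaseTopology`, the `Deployment` enum, and the environment name strings are assumptions chosen for this example, not identifiers from AWS or from the project.

```java
import java.util.Locale;

/** Illustrative mapping from environment name to database deployment type. */
final class DatabaseTopology {

    enum Deployment { SINGLE_AZ, MULTI_AZ }

    /** Lower environments stay in one AZ; Preprod and Prod get Multi-AZ. */
    static Deployment forEnvironment(String environment) {
        switch (environment.trim().toLowerCase(Locale.ROOT)) {
            case "preprod":
            case "prod":
                return Deployment.MULTI_AZ;
            case "dev":
            case "test":
                return Deployment.SINGLE_AZ;
            default:
                // Fail loudly rather than silently picking a topology.
                throw new IllegalArgumentException("Unknown environment: " + environment);
        }
    }

    public static void main(String[] args) {
        System.out.println(forEnvironment("DEV"));   // SINGLE_AZ
        System.out.println(forEnvironment("Prod"));  // MULTI_AZ
    }
}
```

Rejecting unknown environment names is a deliberate choice here: a typo should not result in a production database quietly being created in a single AZ.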

Network and Security

How can you determine network and security prerequisites for a modernisation scenario? This often involves a comprehensive review of the existing network and security infrastructure, followed by discussions with the respective teams. Ultimately, you can create an architecture diagram tailored to the project's needs.

Here are the key considerations we made regarding network and security level changes:

1. Accounts and Environments:

Identify the number of environments required for the project and decide whether to use a single AWS account for all environments or separate accounts for each (e.g., DEV, PrePROD, PROD).

2. VPC (Virtual Private Cloud):

Determine the VPC and CIDR ranges necessary for your applications based on the required number of addresses per environment.

- AWS resources are launched in logically isolated virtual networks defined by VPCs. For example, you can segregate the environments with one VPC each for PROD, PrePROD, and DEV, spread across three different accounts, each with its own CIDR range (for example, a /23).

3. Subnets:

Define the need for public and private subnets within each VPC created for your modernised applications. Public subnets have direct access to an internet gateway, allowing resources to reach the public internet, while private subnets require a NAT (Network Address Translation) device for such access.

- It is recommended to have the subnets in accordance with VPC environment isolations. For example, three private subnets and one public subnet within each VPC. Equipping subnets with required NACL (Network Access Control List) rules to manage inbound and outbound traffic should also be aligned with environment isolations

4. Security Groups:

Identify the security group (SG) requirements based on the types of users accessing modernised application environments. SGs serve as firewalls controlling inbound and outbound traffic to and from resources in your VPC.

- For applications, it’s recommended to have distinct security groups suited to each need, as outlined below:

- Global Protect VPN-SG (users connecting through the Global Protect VPN for private connectivity, e.g. from a home office)

- Apps-ALB-SG (Security group of Application Load Balancer)

- Apps-App-SG (Security group of custom application modules)

- Application Load Balancers (ALBs) are configured with ECS services as the target groups. ECS clusters can be set up in private subnets, using Fargate capacity to run the main web application on a web server. ALB session timeouts are also an important consideration, as they help prevent gateway timeouts during integration system calls. In most scenarios, a timeout of 10-30 seconds is sufficient, with an upper bound of 60 seconds.

- Maintain session stickiness at the application load balancer level so that calling applications retain their session information during interactions with the main applications.
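The VPC and subnet sizing described in items 2 and 3 comes down to simple CIDR arithmetic. The sketch below works through the /23 example in Java. It assumes the VPC range is split into four equal subnets (the text suggests three private and one public, but does not say they are equally sized), and it accounts for the five addresses that AWS reserves in every subnet. The class `CidrMath` and its method names are hypothetical.

```java
/** Illustrative IPv4 CIDR arithmetic for VPC and subnet sizing. */
final class CidrMath {

    // AWS reserves the first four addresses and the last address of each subnet.
    static final int AWS_RESERVED_PER_SUBNET = 5;

    /** Total number of IPv4 addresses in a block with the given prefix length. */
    static long addressCount(int prefixLength) {
        if (prefixLength < 0 || prefixLength > 32) {
            throw new IllegalArgumentException("prefix length must be 0-32");
        }
        return 1L << (32 - prefixLength);
    }

    /** Prefix length of each subnet when a block is split into equal parts. */
    static int subnetPrefix(int vpcPrefixLength, int subnetCount) {
        if (subnetCount <= 0 || Integer.bitCount(subnetCount) != 1) {
            throw new IllegalArgumentException("subnet count must be a power of two");
        }
        int prefix = vpcPrefixLength + Integer.numberOfTrailingZeros(subnetCount);
        if (prefix > 32) {
            throw new IllegalArgumentException("block is too small for that many subnets");
        }
        return prefix;
    }

    /** Addresses left for workloads in an AWS subnet of the given prefix length. */
    static long usableInAwsSubnet(int prefixLength) {
        return Math.max(0, addressCount(prefixLength) - AWS_RESERVED_PER_SUBNET);
    }

    public static void main(String[] args) {
        int vpcPrefix = 23;
        int subnet = subnetPrefix(vpcPrefix, 4);
        System.out.println(addressCount(vpcPrefix));   // 512
        System.out.println(subnet);                    // 25
        System.out.println(usableInAwsSubnet(subnet)); // 123
    }
}
```

With these assumptions, a /23 per environment yields four /25 subnets with 123 usable addresses each, which is worth comparing against the expected number of Fargate tasks, load balancer nodes, and database endpoints.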

Database Considerations

Every application, without exception, performs the crucial functions of collecting, storing, retrieving, and managing data within a database. Traditionally, this database is predominantly a relational database management system (RDBMS) hosted on a dedicated server.

A common and pressing task is to modernise databases (case in point: DB2) by adopting a PaaS approach instead of hosting them on an EC2 compute instance. This scenario calls for transitioning from a dedicated database server to a multi-Availability Zone (AZ) managed database system (such as PostgreSQL).

The journey toward database modernisation encompasses several distinct stages, and it's important to note that these stages need not be approached in an all-or-nothing or strictly sequential manner.

The stages in the database modernisation journey include:

| Migration Path | Complexity of Migration | Cost Benefit | Agility |
| --- | --- | --- | --- |
| Lift and shift | Low | Low | Low |
| Move to managed | Low | Medium | Medium |
| Modernise | Medium | High | High |

Lift and Shift (Self-Managed):

This initial stage involves migrating a database server from an on-premise data centre deployment to AWS Cloud with minimal alterations. The goal is to ensure a smooth transition while retaining the existing management responsibilities.

Move to Managed (Homogeneous Migration):

In this stage, the migration involves transitioning the database engine to a managed cloud database instance / solution. This approach reduces administrative overhead while maintaining consistency with the existing database technology.

Modernise:

The third stage commonly involves migrating away from a self-managed database engine. If relational database capabilities are required, it is recommended to compare and contrast the equivalent managed relational database offerings.

This evaluation helps modernise self-hosted database engines to managed instances (e.g. PostgreSQL on AWS). The process encompasses a range of modifications, such as code-level updates, adjustments to connection pooling, and resolving data type compatibility issues.

Common Challenges Encountered:

1. Data Type Conversion: DB2 converts implicitly between SMALLINT and BOOLEAN values and automatically casts DATE to a string type as needed. PostgreSQL is stricter about such conversions, so code changes might be required to use AWS PostgreSQL:

    // Data type compatibility issue with AWS PostgreSQL
    updateStmt.setBoolean(4, localVariable.isMinus());

    // Possible compatible data type change: bind the flag as an integer (1 or 0)
    updateStmt.setInt(4, localVariable.isMinus() ? 1 : 0);

2. Localisation: For localisation requirements, case conversions, specifically for languages such as Finnish, require changes to be compatible with AWS PostgreSQL:

    // Upper-case the parameter in application code before it is passed to the query
    if (nameParam != null && !nameParam.isEmpty())
    {
        queryParams.setName(nameParam.toUpperCase());
    }

3. Rounding Algorithm: DB2 and AWS PostgreSQL use different rounding algorithms. DB2 uses ROUND_HALF_EVEN, while PostgreSQL uses ROUND_HALF_UP. Adjusting the results to suit business needs should be considered.
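To see why the rounding difference matters, the two modes named above can be compared directly in Java with `BigDecimal` and `RoundingMode`. This is a minimal sketch rather than the project's actual fix: the class `RoundingComparison` and the sample values are assumptions. It only shows how the two modes diverge on exact halves and how rounding can be pinned in application code when results must not depend on the database engine.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

/** Compares HALF_EVEN and HALF_UP rounding on the same inputs. */
final class RoundingComparison {

    /** Rounds to the nearest neighbour; exact halves go to the even neighbour. */
    static BigDecimal halfEven(String value, int scale) {
        return new BigDecimal(value).setScale(scale, RoundingMode.HALF_EVEN);
    }

    /** Rounds to the nearest neighbour; exact halves go away from zero. */
    static BigDecimal halfUp(String value, int scale) {
        return new BigDecimal(value).setScale(scale, RoundingMode.HALF_UP);
    }

    public static void main(String[] args) {
        // The modes differ only when the discarded part is exactly one half.
        System.out.println(halfEven("2.5", 0) + " vs " + halfUp("2.5", 0));     // 2 vs 3
        System.out.println(halfEven("3.5", 0) + " vs " + halfUp("3.5", 0));     // 4 vs 4
        System.out.println(halfEven("0.125", 2) + " vs " + halfUp("0.125", 2)); // 0.12 vs 0.13
    }
}
```

Values are constructed from strings so that the inputs are exact decimals. For amounts such as invoice totals, a one-unit difference in the last digit can accumulate across many rows, so the business should confirm which mode is expected before the migration.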

Upgrading Java/Software stack

Replace local storage with AWS S3

There might be business requirements to store application-generated reports in a local directory structure and to access them directly from the EC2 compute instance(s).

You can mount an S3 bucket as a local directory on the EC2 instance using S3FS and grant users access to the bucket through configured access role(s). S3FS is a FUSE-based file system that allows you to mount an S3 bucket as a directory on your EC2 instance.

Upgrade JDK version

Older versions of the JDK might pose a significant risk to your applications, as they stop receiving security patches once support ends. Newer JDK versions typically bring improved application performance, reduced memory consumption, and faster execution of Java applications. Staying on a supported version ensures that you receive updates, bug fixes, and security patches for an extended period, providing stability for your applications.
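One lightweight way to keep deployments on a supported JDK is to check the runtime version when the application starts. The sketch below uses `Runtime.version().feature()`, which is available from Java 10 onwards. The class `JdkVersionGuard` and the minimum version of 17 are assumptions for illustration; use the baseline your organisation has agreed on.

```java
/** Illustrative start-up check that the application runs on a supported JDK. */
final class JdkVersionGuard {

    // Assumed minimum for this sketch; replace with your agreed baseline.
    static final int MINIMUM_FEATURE_VERSION = 17;

    /** Feature (major) version of the running JDK, e.g. 17 or 21. */
    static int runningFeatureVersion() {
        return Runtime.version().feature();
    }

    /** True when the given feature version meets the required minimum. */
    static boolean isSupported(int featureVersion, int minimumVersion) {
        return featureVersion >= minimumVersion;
    }

    public static void main(String[] args) {
        int running = runningFeatureVersion();
        if (isSupported(running, MINIMUM_FEATURE_VERSION)) {
            System.out.println("JDK " + running + " meets the minimum of " + MINIMUM_FEATURE_VERSION);
        } else {
            // A real application might refuse to start here instead of only warning.
            System.out.println("JDK " + running + " is below the minimum of " + MINIMUM_FEATURE_VERSION);
        }
    }
}
```

The same check can run as an early step in the build pipeline so that an outdated base image is caught before deployment.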

Migrating from CVS to GitHub

If your application's code base was previously stored and managed in a CVS repository, you can transition to GitHub as part of modernisation. While doing so, it is important to preserve the commit and code history as well. Adopting GitHub and Maven projects in your modernisation journey gives you the flexibility to configure an automated build and deployment pipeline via GitHub Actions.

Need some help getting your cloud modernisation journey right the first time?

At Nordcloud, we’ve built our modernisation services to partner with organisations and overcome these kinds of challenges. If you’re already starting your modernisation journey on cloud, you need to make sure all aspects are considered, from planning and execution through to smooth operations. Nordcloud can support you effectively throughout the whole journey.

If you have any questions about this article or would like more information about our cloud modernisation and Transformation services, get in touch with us directly at murthy.sistla@nordcloud.com or max.mikkelsen@nordcloud.com.
