Using Machine Learning to Generate a User Interface

What if you could turn drawings into a website in the blink of an eye? How much easier would it make developers’ lives? Nordcloud UX developer Ari Aittomäki decided to find out.

Optimizing design systems and saving everyone’s time

It was a video from AirBnB that first inspired Ari. Sitting at our Jyväskylä office, Ari was looking for a thesis topic when he came across AirBnB’s ambitious feat of turning hand-drawn images into a mobile application interface. Their tool: machine learning.

Ari saw the value of this immediately. In meetings with busy clients, he could generate a website on the spot using nothing but a video camera and drawings on a whiteboard.

"The idea is this: you’re in a meeting with your client and plan their website by drawing pictures. You use simple sketches, like a box with an X for an image. Normally, each viewer would interpret the drawings differently in their heads. But now, there would be a TV next to you generating the user interface in real-time. Importantly, it would use existing components from the client’s design system. It would already be as close to the final result as possible," Ari explains.

Ari wanted to see how far he could get. The project was extremely ambitious: AirBnB worked with a big team and didn’t release any information on how they accomplished what they did. But that didn’t stop Ari from trying.

Building components from scratch allows you to pick and choose what works

"Right off the bat, I knew I couldn’t be as detailed as AirBnB. If you drew a button, their interface would even try to recognise which button from their component library it was. I settled for four or five larger elements, like a picture with text, a navigation bar, etc.," Ari says. "This was because I had to teach a neural network to recognise each element. To do this, I had to feed it thousands of pictures, and I had to draw all the pictures by hand."

Ari drew 20 pictures of each element. His girlfriend contributed a few more. Then Ari created a script that generated 100 copies of each picture, changing small details and flipping them around so they wouldn’t be identical. Eventually, his sample size was a bit over 1,000.
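To make the idea of multiplying a small set of hand-drawn samples more concrete, here is a minimal sketch of that kind of augmentation. It is not Ari's script: the function names, the use of nested lists as stand-ins for grayscale images, and the choice of small random shifts plus mirroring are all assumptions made for illustration.

```python
import random


def flip_horizontal(img):
    """Mirror each row left to right."""
    return [row[::-1] for row in img]


def shift(img, dx, dy, fill=0):
    """Move the drawing by (dx, dy) pixels, padding exposed areas with `fill`."""
    height, width = len(img), len(img[0])
    out = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            ny, nx = y + dy, x + dx
            if 0 <= ny < height and 0 <= nx < width:
                out[ny][nx] = img[y][x]
    return out


def augment(img, copies=100, max_shift=2, seed=0):
    """Return `copies` slightly altered variants of one drawing.

    Each variant gets a small random shift and is mirrored about half
    of the time (both choices are assumptions, not the original recipe).
    """
    rng = random.Random(seed)
    variants = []
    for _ in range(copies):
        dx = rng.randint(-max_shift, max_shift)
        dy = rng.randint(-max_shift, max_shift)
        variant = shift(img, dx, dy)
        if rng.random() < 0.5:
            variant = flip_horizontal(variant)
        variants.append(variant)
    return variants
```

Calling `augment(drawing)` on one drawing would yield 100 variants of the same size. A real pipeline would more likely work on image files with an imaging library, but the principle is the same.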

"I ended up re-teaching a neural network from Google that had already been taught to recognise things like planes, cats and dogs. It worked really well," Ari shares.

When challenges appear, creative problem-solving is needed

Around this time, Ari faced a problem: "I now had a neural network that recognised images with single elements. I had gotten so close. But the pictures I drew in meetings would have many elements in them. How could I make it recognise multiple elements from the same picture?"

It was as if Ari had a neural network that recognised cats but wanted to feed it a picture with a cat and a dog. How could he stop the network from interpreting the entire picture as one creature?

Ari ended up solving his problem by finding a Python script that, with a few tweaks, would identify areas enclosed by borders and cut them out as separate pictures. This program would chew through each drawing first and feed the individual elements to the neural network one at a time.
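The article doesn't name the script or its method, so the following is only a sketch of one way such a pre-processing step could work. It assumes the drawing has already been reduced to a binary grid (1 for ink, 0 for background), groups touching ink pixels into connected regions, and crops each region's bounding box. The names `find_regions` and `crop` are hypothetical.

```python
from collections import deque


def find_regions(grid, min_size=1):
    """Return bounding boxes (top, left, bottom, right) of connected ink regions.

    Pixels count as connected if they touch horizontally, vertically or
    diagonally. Regions with fewer than `min_size` pixels are ignored.
    """
    height, width = len(grid), len(grid[0])
    seen = [[False] * width for _ in range(height)]
    boxes = []
    for y in range(height):
        for x in range(width):
            if not grid[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill from this unvisited ink pixel.
            queue = deque([(y, x)])
            seen[y][x] = True
            top, left, bottom, right = y, x, y, x
            count = 0
            while queue:
                cy, cx = queue.popleft()
                count += 1
                top, bottom = min(top, cy), max(bottom, cy)
                left, right = min(left, cx), max(right, cx)
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = cy + dy, cx + dx
                        if (0 <= ny < height and 0 <= nx < width
                                and grid[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            if count >= min_size:
                boxes.append((top, left, bottom, right))
    return boxes


def crop(grid, box):
    """Cut the area described by `box` out of the grid as its own picture."""
    top, left, bottom, right = box
    return [row[left:right + 1] for row in grid[top:bottom + 1]]
```

Each cropped region could then be resized and passed to the single-element classifier. Raising `min_size` is one simple way to skip stray specks of ink.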

"I could have continued drawing pictures with multiple elements, but that would have been an uphill battle. It would have taken so long to teach them to the network. Sometimes, the border between doable and almost impossible is really hard to recognise," Ari recollects.

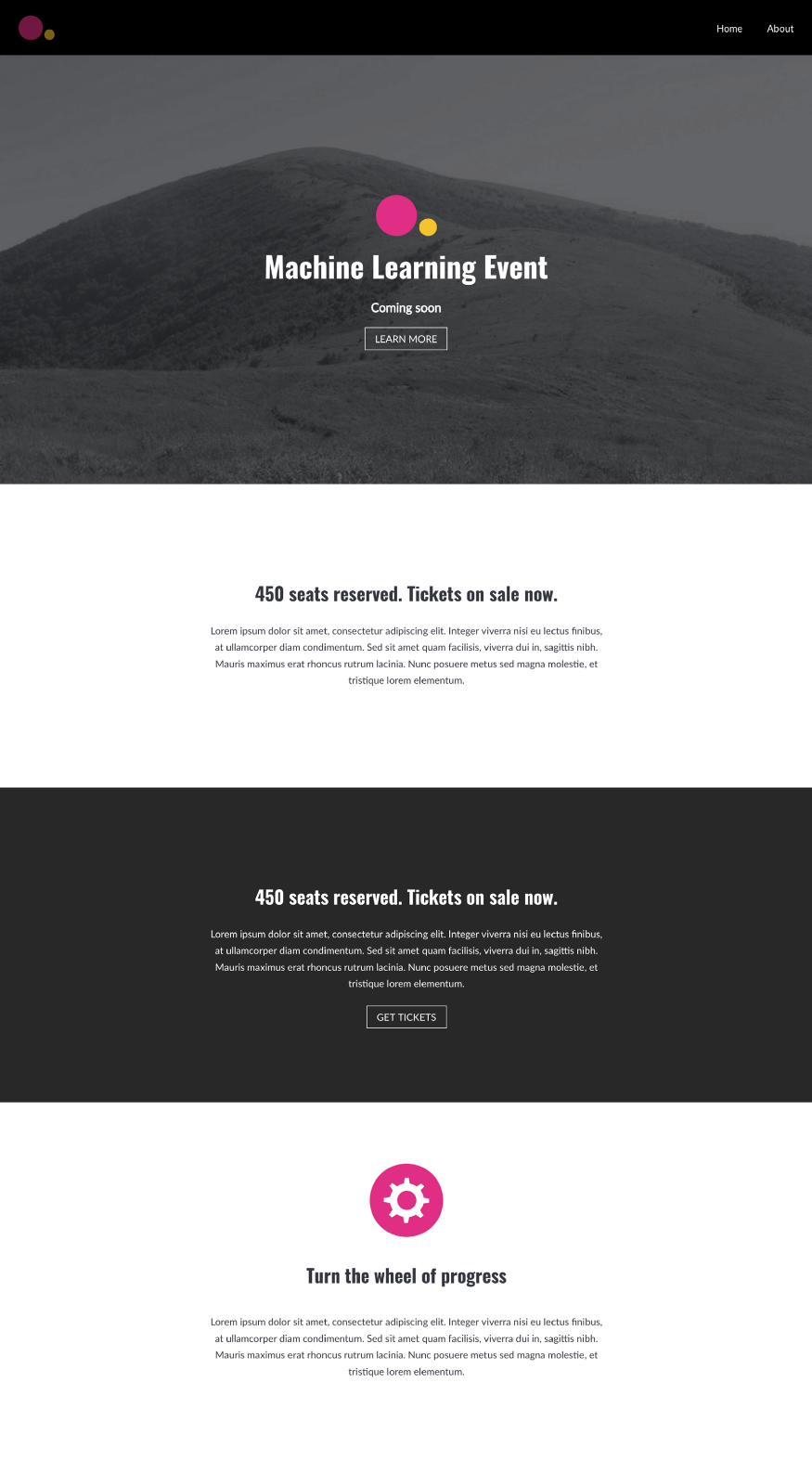

The end result proves that Ari’s ambitious dream could be a reality

Ari’s final result is a prototype with a lot of potential. Work still remains to make it function together with design systems, as intended.

"We definitely have Nordcloudians who could take this to the next level, especially given our expertise in design systems," Ari says.

Ari wasn’t ultimately able to implement the video camera feature he first had in mind. The current prototype uses pictures instead, meaning it doesn’t operate in real time.

"It’s somewhat of a mystery how well it would actually work," Ari says. "It would probably be best to leave its programming to someone with more AI experience."

All in all, Ari is happy with the results. He was able to prove that yes, the idea can be realised – even by one developer. "I also ended up getting a good grade for my thesis," Ari laughs.

Interested in design systems? Check out the Nordcloud Design Studio. And if you’re a design system enthusiast, why not join us?
